The OpenAI Agents SDK is a lightweight yet powerful framework for building multi-agent workflows.

- Agents: LLMs configured with instructions, tools, guardrails, and handoffs

- Handoffs: Allow agents to transfer control to other agents for specific tasks

- Guardrails: Configurable safety checks for input and output validation

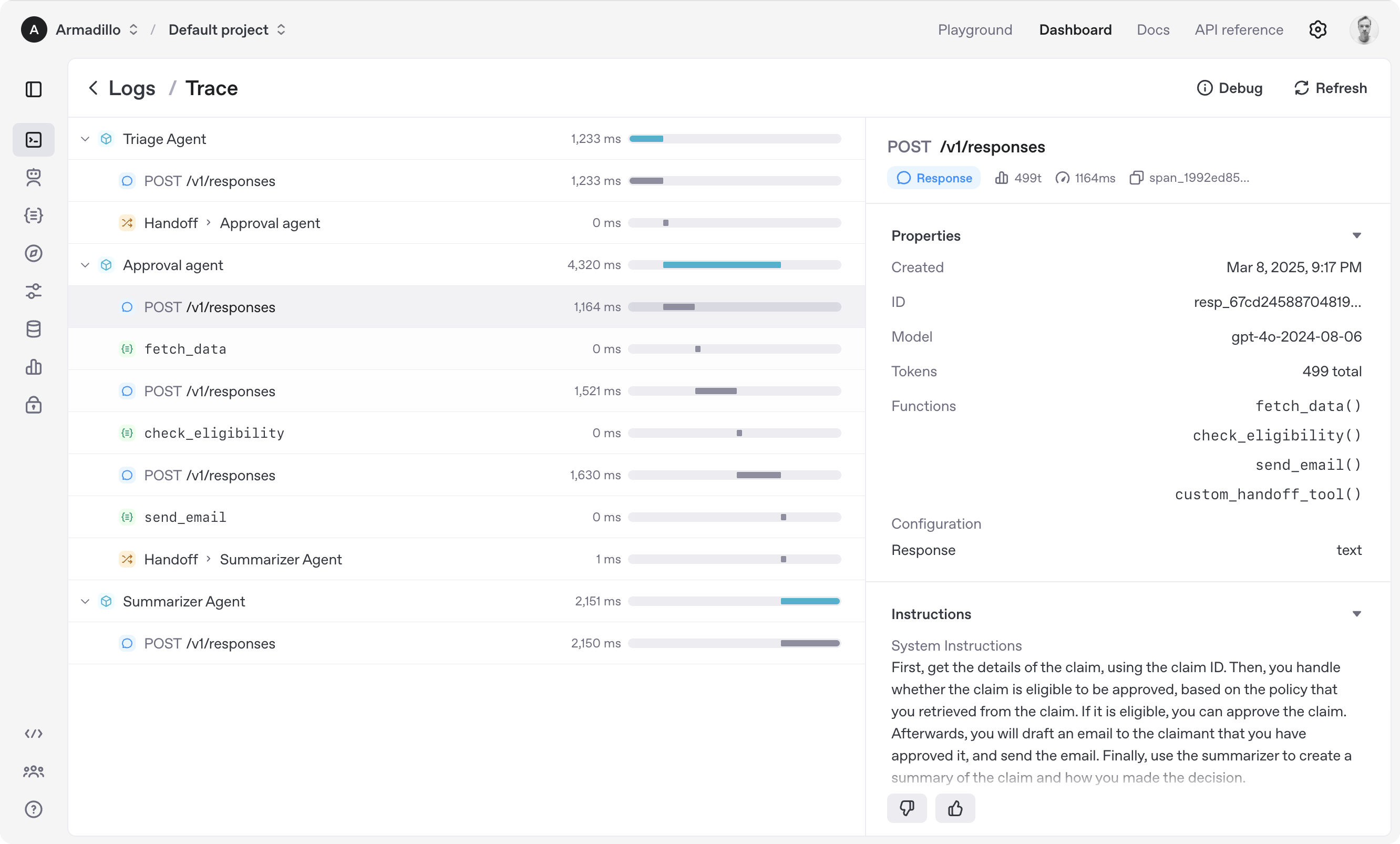

- Tracing: Built-in tracking of agent runs, allowing you to view, debug and optimize your workflows

Explore the examples directory to see the SDK in action, and read our documentation for more details.

Notably, our SDK is compatible with any model provider that supports the OpenAI Chat Completions API format.
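To make "the OpenAI Chat Completions API format" concrete, the sketch below builds the kind of JSON body such a provider accepts at its chat completions endpoint: a model name plus a list of role-tagged messages. `chat_completions_body` and `"example-model"` are hypothetical placeholders, and carrying the agent's instructions in a `system` message is an assumption made for illustration, not a description of how the SDK builds its requests.

```python
import json


def chat_completions_body(model: str, instructions: str, user_input: str) -> dict:
    """Hypothetical helper: a minimal Chat Completions-style request body."""
    return {
        "model": model,  # placeholder; use a model your provider serves
        "messages": [
            # Assumption for illustration: instructions travel as a system message.
            {"role": "system", "content": instructions},
            {"role": "user", "content": user_input},
        ],
    }


body = chat_completions_body("example-model", "You are a helpful assistant", "Hello!")
print(json.dumps(body, indent=2))
```

Any provider that accepts bodies of this shape is what the compatibility claim above refers to.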

- Set up your Python environment

```bash
python -m venv env
source env/bin/activate
```

- Install Agents SDK

```bash
pip install openai-agents
```

A hello world example:

```python
from agents import Agent, Runner

agent = Agent(name="Assistant", instructions="You are a helpful assistant")

result = Runner.run_sync(agent, "Write a haiku about recursion in programming.")
print(result.final_output)

# Example output (yours will vary):
# Code within the code,
# Functions calling themselves,
# Infinite loop's dance.
```

(If running this, ensure you set the `OPENAI_API_KEY` environment variable.)

(For Jupyter notebook users, see `hello_world_jupyter.py`.)

A handoffs example, in which a triage agent hands the conversation off to the agent that speaks the user's language:

```python
import asyncio

from agents import Agent, Runner

spanish_agent = Agent(
    name="Spanish agent",
    instructions="You only speak Spanish.",
)

english_agent = Agent(
    name="English agent",
    instructions="You only speak English",
)

# The triage agent can hand control to either agent listed in `handoffs`.
triage_agent = Agent(
    name="Triage agent",
    instructions="Handoff to the appropriate agent based on the language of the request.",
    handoffs=[spanish_agent, english_agent],
)


async def main():
    result = await Runner.run(triage_agent, input="Hola, ¿cómo estás?")
    print(result.final_output)
    # ¡Hola! Estoy bien, gracias por preguntar. ¿Y tú, cómo estás?


if __name__ == "__main__":
    asyncio.run(main())
```

A tools example, in which the agent calls a Python function exposed to it with `@function_tool`:

```python
import asyncio

from agents import Agent, Runner, function_tool


# @function_tool turns this function into a tool the agent can call.
@function_tool
def get_weather(city: str) -> str:
    return f"The weather in {city} is sunny."


agent = Agent(
    name="Hello world",
    instructions="You are a helpful agent.",
    tools=[get_weather],
)


async def main():
    result = await Runner.run(agent, input="What's the weather in Tokyo?")
    print(result.final_output)
    # The weather in Tokyo is sunny.


if __name__ == "__main__":
    asyncio.run(main())
```

When you call `Runner.run()`, we run a loop until we get a final output.

1. We call the LLM, using the model and settings on the agent, and the message history.

2. The LLM returns a response, which may include tool calls.

3. If the response has a final output (see below for more on this), we return it and end the loop.

4. If the response has a handoff, we set the agent to the new agent and go back to step 1.

5. We process the tool calls (if any) and append the tool response messages. Then we go to step 1.

There is a `max_turns` parameter that you can use to limit the number of times the loop executes.
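The loop described above can be sketched in plain Python. This is not the SDK's implementation: `SketchAgent`, `FakeResponse`, `run_loop`, and `TooManyTurnsError` are hypothetical names, each agent's "model" is a scripted function standing in for a real LLM call (so it runs without an API key), and only the plain-text final-output rule is modeled.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class FakeResponse:
    """Hypothetical stand-in for one LLM response."""
    text: Optional[str] = None
    tool_calls: List[Tuple[str, str]] = field(default_factory=list)  # (tool name, argument)
    handoff: Optional["SketchAgent"] = None


@dataclass
class SketchAgent:
    """Hypothetical agent: a name, a scripted 'model', and named tools."""
    name: str
    model: Callable[[List[dict]], FakeResponse]
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)


class TooManyTurnsError(RuntimeError):
    """Raised when the loop hits max_turns without a final output."""


def run_loop(agent: SketchAgent, user_input: str, max_turns: int = 10) -> Tuple[str, Optional[str]]:
    history: List[dict] = [{"role": "user", "content": user_input}]
    for _ in range(max_turns):
        # Steps 1-2: call the current agent's model with the message history.
        response = agent.model(history)
        # Step 3: a response with no tool calls and no handoff is the final output.
        if response.handoff is None and not response.tool_calls:
            return agent.name, response.text
        # Step 4: on a handoff, switch agents and go back to step 1.
        if response.handoff is not None:
            agent = response.handoff
            continue
        # Step 5: run each tool, append its result to the history, then loop again.
        for tool_name, argument in response.tool_calls:
            result = agent.tools[tool_name](argument)
            history.append({"role": "tool", "name": tool_name, "content": result})
    raise TooManyTurnsError(f"No final output after {max_turns} turns")


def weather_model(history: List[dict]) -> FakeResponse:
    # Scripted: request the tool first, then answer with its result.
    tool_results = [m["content"] for m in history if m["role"] == "tool"]
    if not tool_results:
        return FakeResponse(tool_calls=[("get_weather", "Tokyo")])
    return FakeResponse(text=tool_results[-1])


weather_agent = SketchAgent(
    name="Weather agent",
    model=weather_model,
    tools={"get_weather": lambda city: f"The weather in {city} is sunny."},
)
triage_agent = SketchAgent(
    name="Triage agent",
    model=lambda history: FakeResponse(handoff=weather_agent),
)

print(run_loop(triage_agent, "What's the weather in Tokyo?"))
# ('Weather agent', 'The weather in Tokyo is sunny.')
```

The run takes three turns (handoff, tool call, final answer), so calling `run_loop(..., max_turns=2)` raises `TooManyTurnsError`, which is the role a turn limit plays.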

Final output is the last thing the agent produces in the loop.

- If you set an `output_type` on the agent, the final output is when the LLM returns something of that type. We use structured outputs for this.

- If there's no `output_type` (i.e. plain text responses), then the first LLM response without any tool calls or handoffs is considered the final output.

As a result, the mental model for the agent loop is:

- If the current agent has an `output_type`, the loop runs until the agent produces structured output matching that type.

- If the current agent does not have an `output_type`, the loop runs until the current agent produces a message without any tool calls/handoffs.
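The two rules above can be expressed as a single check on each response. This is a hedged sketch, not SDK code: `check_final_output` and the `CalendarEvent` dataclass are hypothetical, and parsing JSON into a dataclass stands in for the structured outputs the SDK actually relies on, so its handling of non-matching output may differ.

```python
import json
from dataclasses import dataclass
from typing import Any, Optional, Type


@dataclass
class CalendarEvent:
    """Hypothetical output_type used only for this illustration."""
    name: str
    date: str


def check_final_output(
    text: Optional[str],
    has_tool_calls: bool,
    has_handoff: bool,
    output_type: Optional[Type[Any]] = None,
) -> Optional[Any]:
    """Return the final output for one response, or None if the loop continues."""
    # A response that calls tools or hands off is never final.
    if has_tool_calls or has_handoff:
        return None
    # No output_type: the plain-text message is the final output.
    if output_type is None:
        return text
    # With an output_type: final only if the text parses into that type.
    try:
        return output_type(**json.loads(text))
    except (TypeError, ValueError):
        return None


print(check_final_output("Hello!", False, False))
# Hello!
print(check_final_output('{"name": "Demo", "date": "Friday"}', False, False, CalendarEvent))
# CalendarEvent(name='Demo', date='Friday')
print(check_final_output("Let me check.", False, False, CalendarEvent))
# None
```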

The Agents SDK is designed to be highly flexible, allowing you to model a wide range of LLM workflows, including deterministic flows, iterative loops, and more. See examples in `examples/agent_patterns`.

The Agents SDK automatically traces your agent runs, making it easy to track and debug the behavior of your agents. Tracing is extensible by design, supporting custom spans and a wide variety of external destinations, including Logfire, AgentOps, Braintrust, Scorecard, and Keywords AI. For more details about how to customize or disable tracing, see Tracing.
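To make "traces" and "spans" concrete, here is a toy recorder in which a trace is a list of timed spans, each remembering the span it was nested in. `SketchTrace`, `Span`, and the span names are hypothetical and this is not the SDK's tracing API; a real tracer would also export these records to a destination such as the ones listed above.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass
from typing import Iterator, List, Optional


@dataclass
class Span:
    """One timed operation inside a trace (hypothetical structure)."""
    name: str
    parent: Optional[str]
    duration_s: float = 0.0


class SketchTrace:
    """Hypothetical trace: records nested, timed spans for one workflow run."""

    def __init__(self, workflow_name: str) -> None:
        self.workflow_name = workflow_name
        self.spans: List[Span] = []
        self._open: List[str] = []  # names of spans currently open, innermost last

    @contextmanager
    def span(self, name: str) -> Iterator[Span]:
        # The innermost open span, if any, becomes this span's parent.
        record = Span(name=name, parent=self._open[-1] if self._open else None)
        self.spans.append(record)
        self._open.append(name)
        start = time.perf_counter()
        try:
            yield record
        finally:
            record.duration_s = time.perf_counter() - start
            self._open.pop()


run = SketchTrace("Weather workflow")
with run.span("agent: Triage agent"):
    with run.span("handoff: Weather agent"):
        pass
with run.span("agent: Weather agent"):
    with run.span("tool: get_weather"):
        pass

for s in run.spans:
    print(f"{s.name} (parent: {s.parent})")
```

The parent links are what let a tracing UI show agent runs, handoffs, and tool calls as a tree.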

If you want to work on the SDK or its examples:

- Ensure you have `uv` installed.

```bash
uv --version
```

- Install dependencies

```bash
make sync
```

- (After making changes) lint/test

```bash
make tests  # run tests
make mypy   # run typechecker
make lint   # run linter
```

We'd like to acknowledge the excellent work of the open-source community, especially:

We're committed to continuing to build the Agents SDK as an open source framework so others in the community can expand on our approach.

This integration combines the power of Firecrawl for web scraping with OpenAI for information extraction.

- Extract any type of information from any website

- Simple, easy-to-use interface

- Handles natural language prompts

- Install the required packages:

```bash
pip install openai firecrawl-py
```

- Set your API keys as environment variables:

```bash
export OPENAI_API_KEY=your_openai_api_key
export FIRECRAWL_API_KEY=your_firecrawl_api_key
```

- Run the script:

```bash
python firecrawl_agent.py
```

Enter any prompt like:

- "Extract pricing information from mendable.ai"

- "Find the features of Anthropic's Claude model from anthropic.com"

- "Get the latest news from techcrunch.com"

The script will:

- Extract the website URL from your prompt

- Use Firecrawl to scrape the website

- Use OpenAI to analyze the content based on your specific request

- Display the results
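The four steps above can be sketched as a small pipeline. The script `firecrawl_agent.py` itself is not shown here, so this is an assumption-laden illustration: `extract_url`, `run_pipeline`, and the regex are hypothetical (the real script may extract the URL differently, for example with the LLM), and the scrape and analyze steps are injected stubs rather than real Firecrawl or OpenAI calls.

```python
import re
from typing import Callable, Optional

# Hypothetical pattern: an optional http(s) scheme, a dotted domain such as
# "mendable.ai", and an optional path. Deliberately simple, not a full URL parser.
URL_PATTERN = re.compile(r"(https?://)?((?:[a-z0-9-]+\.)+[a-z]{2,})(/\S*)?", re.IGNORECASE)


def extract_url(prompt: str) -> Optional[str]:
    """Step 1: pull the first website out of the prompt, defaulting to https."""
    match = URL_PATTERN.search(prompt)
    if match is None:
        return None
    scheme, domain, path = match.groups()
    return f"{scheme or 'https://'}{domain}{path or ''}"


def run_pipeline(
    prompt: str,
    scrape: Callable[[str], str],
    analyze: Callable[[str, str], str],
) -> str:
    """Steps 2-3: scrape the site, then analyze its content against the prompt."""
    url = extract_url(prompt)
    if url is None:
        raise ValueError("No website found in the prompt")
    print(f"Scraping {url}...")
    content = scrape(url)  # stands in for the Firecrawl scraping step
    print("Extracting information...")
    return analyze(prompt, content)  # stands in for the OpenAI analysis step


# Stub callables so the sketch runs without API keys.
result = run_pipeline(
    "Extract pricing information from mendable.ai",
    scrape=lambda url: f"<page content of {url}>",
    analyze=lambda prompt, content: f"Answer based on {content}",
)
# Step 4: display the results.
print("--- Result ---")
print(result)
```

Injecting `scrape` and `analyze` keeps the control flow testable; swapping in real client calls changes only those two arguments.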

Example session:

```
Enter your prompt (e.g., 'Extract pricing information from mendable.ai'): Extract pricing information from mendable.ai
Scraping https://mendable.ai...
Extracting information...

--- Result ---
Mendable.ai offers the following pricing plans:

1. Free Plan
   - $0/month
   - 100 queries/month
   - 1 project
   - Basic features

2. Pro Plan
   - $49/month
   - 1,000 queries/month
   - 3 projects
   - All features including API access

3. Team Plan
   - $199/month
   - 5,000 queries/month
   - 10 projects
   - All Pro features plus team collaboration

4. Enterprise Plan
   - Custom pricing
   - Unlimited queries
   - Unlimited projects
   - Custom features and dedicated support
```

Requirements:

- Python 3.7+

- OpenAI API key

- Firecrawl API key